PART 1: FINDING MAPS IN ALBUM COVER ART USING AI

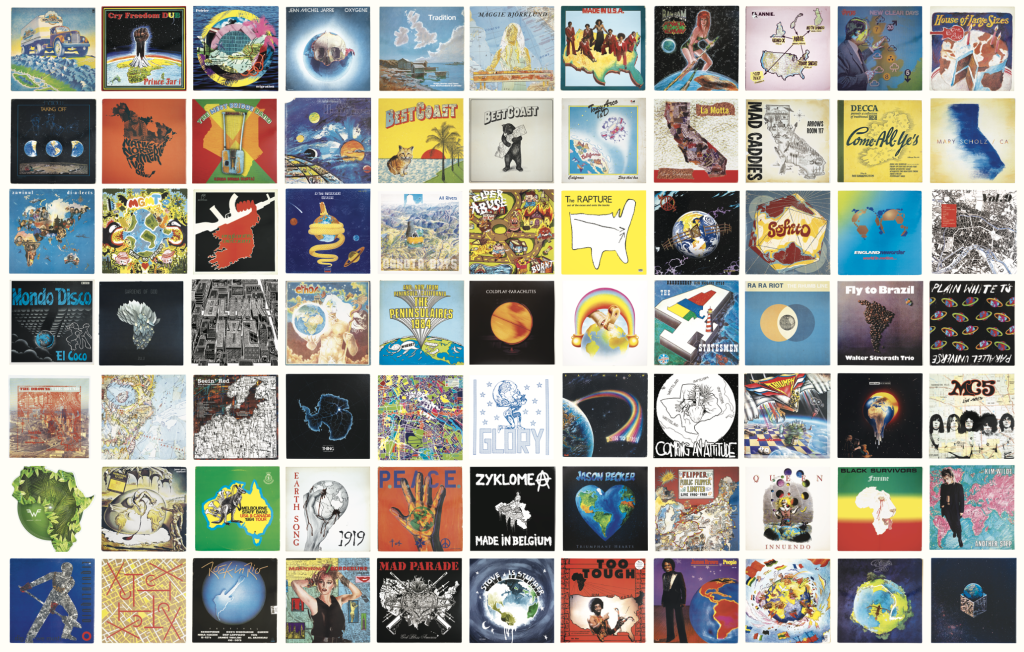

In the middle of last year, I released Maps on Vinyl: An Atlas of Album Cover Maps. The book showcases 415 vinyl album covers and shares the stories behind them. The collection was built up over a decade of visiting physical and digital record stores, thrift shops, and garage sales, along with the occasional generous gift from friends and fellow record collectors.

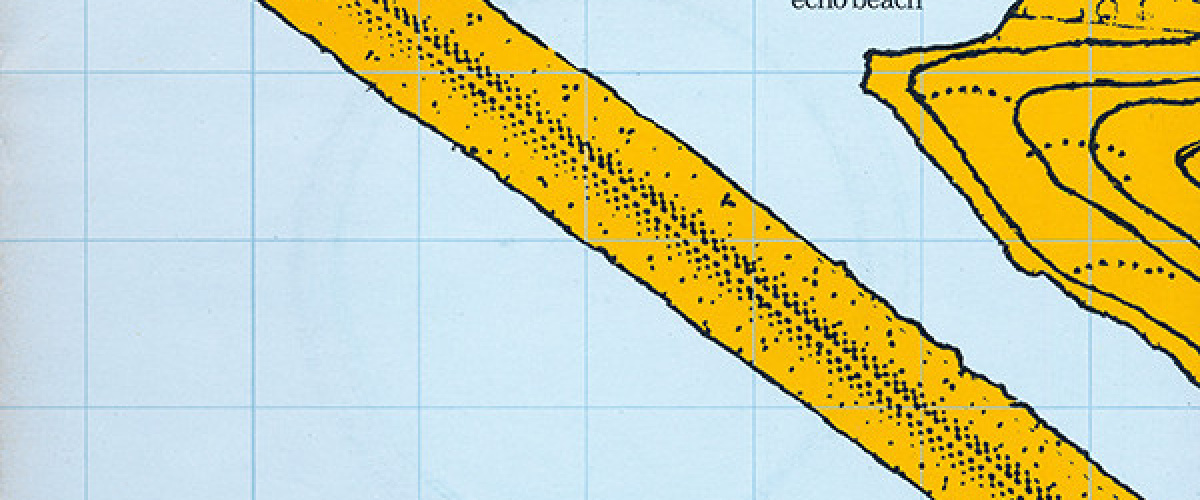

Building the collection was definitely an adventure, but at times it felt like a needle-in-a-haystack mission. For over ten years, I sifted through hundreds of thousands of records to identify those rare sleeves that incorporated cartography into their design. I would learn that roughly 1 in every 500 vinyl album covers featured a map of some kind. But as AI advanced during the production of the book, I started to wonder: could I automate this search and find map-based album cover art using AI?

This post aims to provide a map designer’s insight into that journey, the technical hurdles cleared, and the reality of how AI perceives art in a 30 cm x 30 cm space.

The Question

My research question was simple, or at least I thought so:

Can a 2026 version of AI, specifically vision-language models, successfully distinguish cartographic elements from other artistic textures on an album cover?

Test Data

- File Formats: a mix of .jpg and .png files

- Total Images: 894

- 415 contained a map

- 479 did not contain a map

- Average Resolution: 608 x 603 px

- Average File Size: 155.96 KB

Objective

My primary goal was to feed AI potentially thousands of images and have it recall every cover with a map on it. If this meant AI returning a high percentage of ‘False Positives’ (non-map covers that I’d have to delete or skip over), I was OK with that: deleting non-map covers is easier than recovering map covers that AI missed. I wanted the system to err on the side of over-detection, which in machine-learning terms means favoring recall over precision. For example, if AI sees a pattern that it thinks is a coastline or a street grid, my preference was for it to tag the cover as a ‘map’.
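To make that trade-off concrete, here is a minimal Python sketch of how precision and recall pull against each other. Only the class totals (415 map covers, 479 non-map covers) come from the test data above; the two sets of outcome counts and the `precision_recall` helper are invented for illustration and are not results from this project.

```python
def precision_recall(true_pos, false_pos, false_neg):
    """Return (precision, recall) from raw counts, guarding against empty denominators."""
    flagged = true_pos + false_pos      # everything the model tagged as 'map'
    actual = true_pos + false_neg       # everything that really is a map cover
    precision = true_pos / flagged if flagged else 0.0
    recall = true_pos / actual if actual else 0.0
    return precision, recall


MAP_COVERS = 415       # from the test data
NON_MAP_COVERS = 479   # from the test data

# Hypothetical 'strict' setup: few false alarms, but 115 map covers are missed.
strict = precision_recall(true_pos=300, false_pos=20, false_neg=115)

# Hypothetical 'generous' setup: 200 false alarms, but only 10 map covers are missed.
generous = precision_recall(true_pos=405, false_pos=200, false_neg=10)

for name, (precision, recall) in {"strict": strict, "generous": generous}.items():
    print(f"{name}: precision={precision:.2f}, recall={recall:.2f}")
```

Under these made-up numbers, the generous setup leaves more non-map covers to delete by hand but loses far fewer map covers, which is the behavior I was after.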

Choosing the ‘best’ AI model for the project

As this was meant to be a holiday exercise to feed my curiosity, and to take away some of the monotony of sifting through bucket-loads of album covers online, I wasn’t interested in manually training an AI model on potentially thousands of images, where I would be required to label each part of a cover as ‘map’ or ‘not map’. That felt like too much work for someone who was in holiday mode ;).

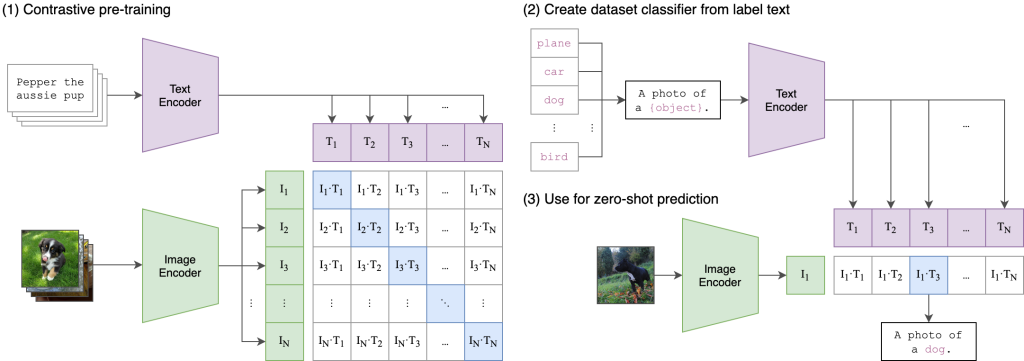

I was hoping that I could leverage an existing AI that could start finding maps right away. After searching the web, reading various articles, and consulting with Gemini, I landed on CLIP, a neural network from OpenAI (the company behind ChatGPT). OpenAI says it “efficiently learns visual concepts from natural language supervision. CLIP can be applied to any visual classification benchmark by simply providing the names of the visual categories to be recognized”.

The documentation also clearly outlined CLIP’s limitations: “While CLIP usually performs well on recognizing common objects, it struggles on more abstract or systematic tasks”, and “we’ve observed that CLIP’s zero-shot classifiers can be sensitive to wording or phrasing and sometimes require trial and error ‘prompt engineering’ to perform well”, which I would learn to be true. Finally, the makers of CLIP acknowledge that it generalizes poorly to images not covered in its pre-training set; on handwritten digits from the MNIST dataset, for example, zero-shot CLIP achieves only 88% accuracy. It’s not clear if maps were included as part of its training set.
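For readers curious about what “providing the names of the visual categories” amounts to, the scoring step can be sketched in plain Python. This is a toy illustration under stated assumptions, not the setup used in this project: the two prompts are hypothetical, the 3-number vectors are invented stand-ins for the much longer embeddings a real CLIP model would produce, and the scale of 100 is an assumed value for the temperature applied before the softmax.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def zero_shot_probs(image_vec, prompt_vecs, scale=100.0):
    """Softmax over scaled cosine similarities: one probability per prompt."""
    logits = [scale * cosine(image_vec, vec) for vec in prompt_vecs.values()]
    peak = max(logits)  # subtract the max so exp() cannot overflow
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return {label: e / total for label, e in zip(prompt_vecs, exps)}


# Hypothetical prompts with invented toy embeddings (not real CLIP output).
prompt_vecs = {
    "an album cover with a map": [0.9, 0.1, 0.2],
    "an album cover without a map": [0.1, 0.9, 0.3],
}
cover_vec = [0.8, 0.2, 0.1]  # invented embedding for a single cover image

probs = zero_shot_probs(cover_vec, prompt_vecs)
best_label = max(probs, key=probs.get)
print(best_label, round(probs[best_label], 3))
```

Because the label is simply whichever prompt lands closest to the image, rewording a prompt moves its vector and can flip the result, which is one way to picture the wording sensitivity described above.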

So how reliably could CLIP find a map by ‘reading’ a set of natural language prompts and matching them against the mathematical signature of each cover image? I was about to find out…

This is Part 1 of a 3-part series. In Part 2, I’ll share the natural language prompts, scoring, and threshold settings that I used to try to identify album cover maps with CLIP.